The first image that comes to my mind when I hear the word IBM is of the bulky computer consoles, with 13-inch monochrome monitors, that adorned the computer lab in my school. That was 20 years ago. At the IBM World of Watson (WOW) in Las Vegas this October, I had expected an expo of ultra-slim laptops, next-generation PCs and high-capacity pocket storage devices, demonstrated by men in black suits and white shirts as the IBM song Ever Onward played in the background. I was wrong. There, a jar listened to my preferences and offered me candies, a vehicle welcomed me inside and drove off by itself, a robot struck up a conversation with me, a dress understood emotions and changed colour, a bar suggested a beer based on my preferences, and much more, all built on the Watson platform.

Watson, the artificial intelligence technology developed by IBM and named after the company’s founder Thomas J. Watson, is designed to work the way humans do: to observe, interpret, evaluate and decide. Conventional computing, by contrast, can handle only structured data, such as what is stored in a database. “Watson can understand unstructured data, and 80 per cent of data is unstructured. This includes literature, research reports, tweets, blogs, posts and more. Unlike a search engine, Watson breaks down a sentence grammatically, relationally and based on context,” said David Kenny, general manager, IBM Watson.

Watson was first introduced to the world in February 2011, on the American television quiz show Jeopardy!. “In 2007, when I was approached on developing an artificial intelligence computing system for Jeopardy!, I was sceptical. Researchers had been trying over 15 years, but had failed. Developing a system that could deal with massive amounts of unstructured data was a challenge. In three years, we, however, developed and trained Watson to compete in Jeopardy!,” said John Kelly, senior vice president, cognitive solutions and research, IBM. Watson won the competition against two human players, Ken Jennings and Brad Rutter, a major breakthrough in the effort to create a machine as smart as a human. This was only the beginning of next-generation computing.

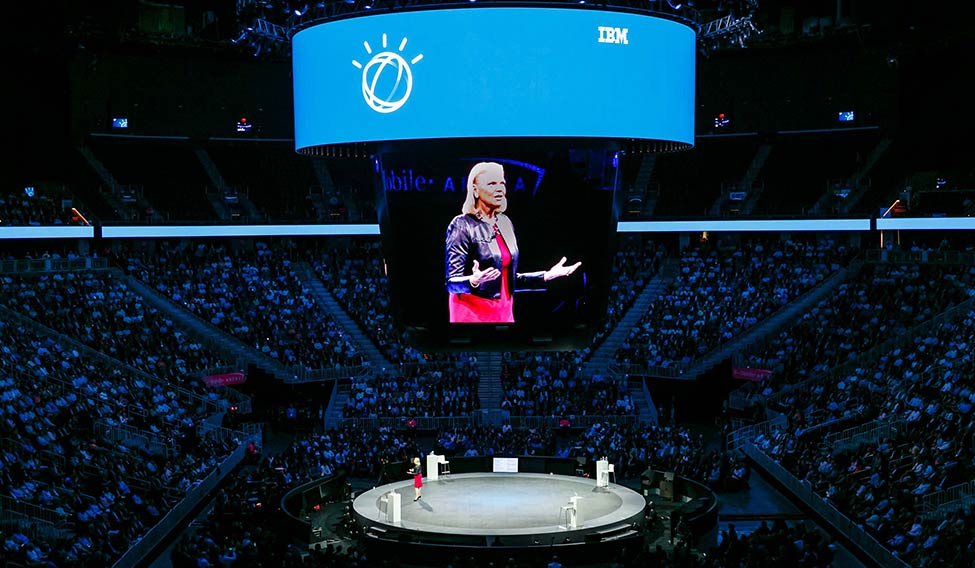

Future is here: IBM CEO Ginni Rometty delivers keynote address at the IBM World of Watson, in Las Vegas.

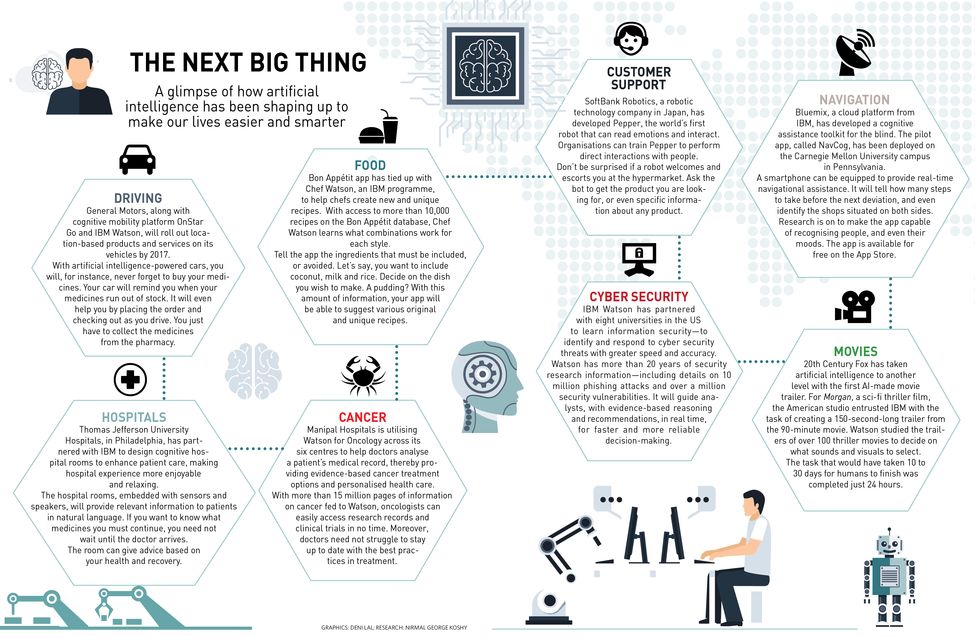

Since then, IBM has been deploying Watson’s intelligence in the real world: in health care, crime prevention, cyber security, education, aviation, weather prediction, fashion and transportation. The American pharmaceutical company Pfizer, through its partnership with IBM Watson, has taken the monitoring of patients with Parkinson’s disease to a whole new level. Sensors attached to objects gather information about the patient's movement, sleep, grooming, dressing and eating. Sensors are also embedded in surfaces, such as a chair, from which activity can be recorded. The chair sensors record the time the patient takes to get up, the pressure applied on the chair, and even the sitting posture. Drawing on the millions of pieces of information available to it, Watson will monitor and assess the person's health every second and predict risks. This is expected to standardise the tests and put an end to frequent hospital visits.

“In just five years Watson has grown from a question-answering machine to a cloud technology that is used in over 45 countries, by more than 20 industries, in making important decisions,” said Kelly.

Watson can be trained to understand the jargon and language of each industry. With the guidance of human experts, it gains knowledge of a specific domain and builds a library from the available literature. “In the next five years, each and every industry will use Watson; and if I could predict, in 15 years, Watson will start predicting the future—days, weeks and months ahead,” said Kelly. For instance, Watson has been working closely with police departments in the US to predict crimes and prevent them. Watson’s predictive analytics tool collects and analyses the vast amounts of real-time information it receives from surveillance cameras, gunshot and licence plate detectors and facial recognition systems, and provides insights to the police almost instantly. This helps the police to plan, rather than to react. Similarly, The Weather Company, a subsidiary of IBM, has been training Watson to predict natural catastrophes and to provide air travellers with turbulence-free, safe travel.

David Kenny, general manager, IBM Watson

“Where can I get some coffee?” I asked a lady who was holding a ‘May I help you?’ signboard.

“Why don’t you ask Pepper?” she said with a smile and pointed to a stall about 10 feet away.

I walked to the stall, and to my surprise I found no one, except a robot, three feet tall, with blue eyes.

“Hi,” I said.

“Hi, I am Pepper. Please ask me something,” said the bot.

“Where can I get some coffee?” I asked.

“Well, you will find a beverage stall at the southern side of the venue. Is there anything else you would like to know?”

“Yes, Pepper, what session do you recommend for me?”

“You shouldn’t miss Green’s Watson Internet of Things keynote, happening at the Mandalay Bay ballroom at 3pm today.”

“And when is Ginni Rometty speaking?” I asked.

“The keynote by Ginni Rometty is on tomorrow at 1pm at the T-Mobile Arena.”

I got curious. I asked, “How did you win Jeopardy!?”

“I can understand natural language with a high degree of confidence. The rest is secret.”

“Can you tell a joke, Pepper?”

“Oh yeah. The past, present, and future walked into a bar. It was tense.”

Phew! These robots are bad at jokes, I said to myself.

“Thanks, Pepper,” I said.

“See you around. It was nice talking to you,” a courteous Pepper replied.

Pepper is a bot developed by SoftBank and Aldebaran Robotics. It is powered by IBM Watson and is trained to read emotions, analyse expressions and voice tones, and interact with clients and developers through artificial intelligence computing.

Watson chatbots are built on natural language processing algorithms that can understand the underlying intention and context of a communication.

“Millennials have no desire to call up 1-800 numbers, to try and find a phone number and go through that process. Customer engagement today is driven by the consumer wanting to get the questions answered or tasks done much faster and easier. That is one of the reasons why organisations are adopting conversational applications,” said Brian Loveys, program director for Watson Emerging Technologies.

“More has been done in cognitive computing in the last two years than in the last two decades,” said Ginni Rometty, president and CEO, IBM. “Watson has already started interacting with 200 million consumers—in shopping, insurance, weather, government services, travel plans and more. I am more than optimistic. It isn’t a world of Watson, but a world with Watson,” she said.

Still fascinated by Pepper, I next followed the crowd heading for a special bus ride. How special could a bus ride be, I wondered.

After I had waited about five minutes at the boarding point, a minibus with ‘Olli’ printed on all four sides arrived and opened its door. As I was about to greet the driver after boarding, I realised there was no driver... not even a cockpit. The door closed.

“Hi, I’m Olli, your driver,” came a voice from nowhere.

Ride smart: Olli is the first 3-D printed, self-driving vehicle. The makers of Olli inside the vehicle.

Inside Olli, I met Joe Speed, Watson IoT AutoLab Product Owner, who told me about the vehicle I was in. Olli is the first 3D-printed, self-driving, electric vehicle. It was IBM’s partnership with Local Motors that put Watson IoT into a vehicle. Olli is equipped with 30 sensors, including two ladars (laser radars) for 360-degree surveillance. It can seat up to 12 people. The one I was in was an actual-size prototype.

“The specialty of Olli is it speaks naturally and it understands natural language. There is no need for coding. With its cute name and natural voice, people can easily relate with Olli,” said Bret Greenstein, vice president, Watson IoT Platform Offerings.

We started chatting.

I: Hi Olli, how fast can you go?

Olli: My maximum speed is 30 miles per hour. But I usually operate at 10 to 15 miles per hour.

I: And how far can you go?

Olli: I can typically get 40 miles of power in a single charge.

As the conversation picked up pace, I asked: “Where can we get some biriyani?”

“That sounds good. I must try it myself. Your options are listed here. Tap one and I will take you there,” Olli said. The touch-responsive screen displayed three nearby restaurants that served biriyani. I tapped on one of the options, and Olli warned: “I would suggest you take an umbrella, since there are chances of thunderstorm at the destination.” Olli relies on The Weather Company for its weather forecasts.

Ask Sebastian Thrun, founder of Google X, why machines would make better drivers, and he would say: “When you make a mistake in driving, only you will learn from it. When an artificial intelligence-driven vehicle makes a mistake, all other AI-driven vehicles would learn from it at the same time, and that’s when AI wins over humans. Humans aren’t great at driving; they bump into each other, clock the same route at the same time, and pollute the earth.”

“Perception of what is possible has to come out faster. Too often people say, ‘Wouldn’t it be nice if’, but they don’t even think that it is even possible, and yet that innovation is right around the corner,” said John B. Rogers, CEO and co-founder of Local Motors. He added that Olli can make decisions 100 times faster than humans.

“The world of IoT is moving at lightning speed. The only way to stay ahead is by innovating as quickly as possible. That’s what Local Motors and IBM have been doing,” said Kal Gyimesi, automotive market lead, IBM Watson IoT.

As Speed said, Olli has a soft and friendly design. “After the keynote address in Berlin, a month ago, people who came to the stage started hugging the bus. The people really loved Olli,” said Speed.

When my ride ended, Olli dropped me at the exit point. My ego, however, would not let a bot win the chat. As I was about to exit, I asked: “Olli, what do you think about the US presidential election?” It replied, “I would rather not comment since they don’t let vehicles vote yet.”

I stepped out of Olli.

When I was a child, I always wanted attire with supernatural powers: a shirt that could make me invisible, a pair of shorts that could take me places in no time, or a pair of shoes that could turn into a jetpack. I could not find any then. IBM’s partnership with Marchesa, a US brand that specialises in luxury womenswear, has made part of that wish come true. Yes, a dress that can change its colour based on human emotions.

“Watson learns like humans, can look at texts, videos and images, grabbing what the fans are saying about the dress, and understand the emotions underneath,” said Jeffrey Arn, Watson marketing analytics strategist.

The idea of the cognitive dress came up when Vogue suggested an unusual theme for Met Gala 2016: Manus x Machina (Hand x Machine): Fashion in an Age of Technology. IBM and Marchesa joined hands to create a dress that is about more than just clothing. Marchesa selected five human emotions (joy, passion, excitement, encouragement and curiosity) that they wanted the dress to convey, and assigned a colour to each emotion.

“We fed hundreds of images of Marchesa dresses into Watson and Watson came back with guidelines on which direction we should go, which colour stories we should take, and it had been segregated into several emotions,” said Georgina Chapman, co-founder and designer, Marchesa.

During the Met Gala event, Watson analysed every tweet tagged #CognitiveDress and decoded the underlying emotions. It then lit up 150 LED-connected flowers embedded in the dress, changing colours in real time, in tune with the sentiments of users who were tweeting about the event.

“Seeing one of our dresses that can respond and communicate with humans was something we never thought we would ever see,” said Keren Craig, co-founder, Marchesa.

As I walked round the cognitive dress, getting a glimpse from all sides, I recalled David Kenny’s statement: “IBM is not your grandfather’s IBM anymore.”